Hi,

I'm building a simple AI agent and before it does anything else it needs to make a basic decision — should it attempt a task or not?

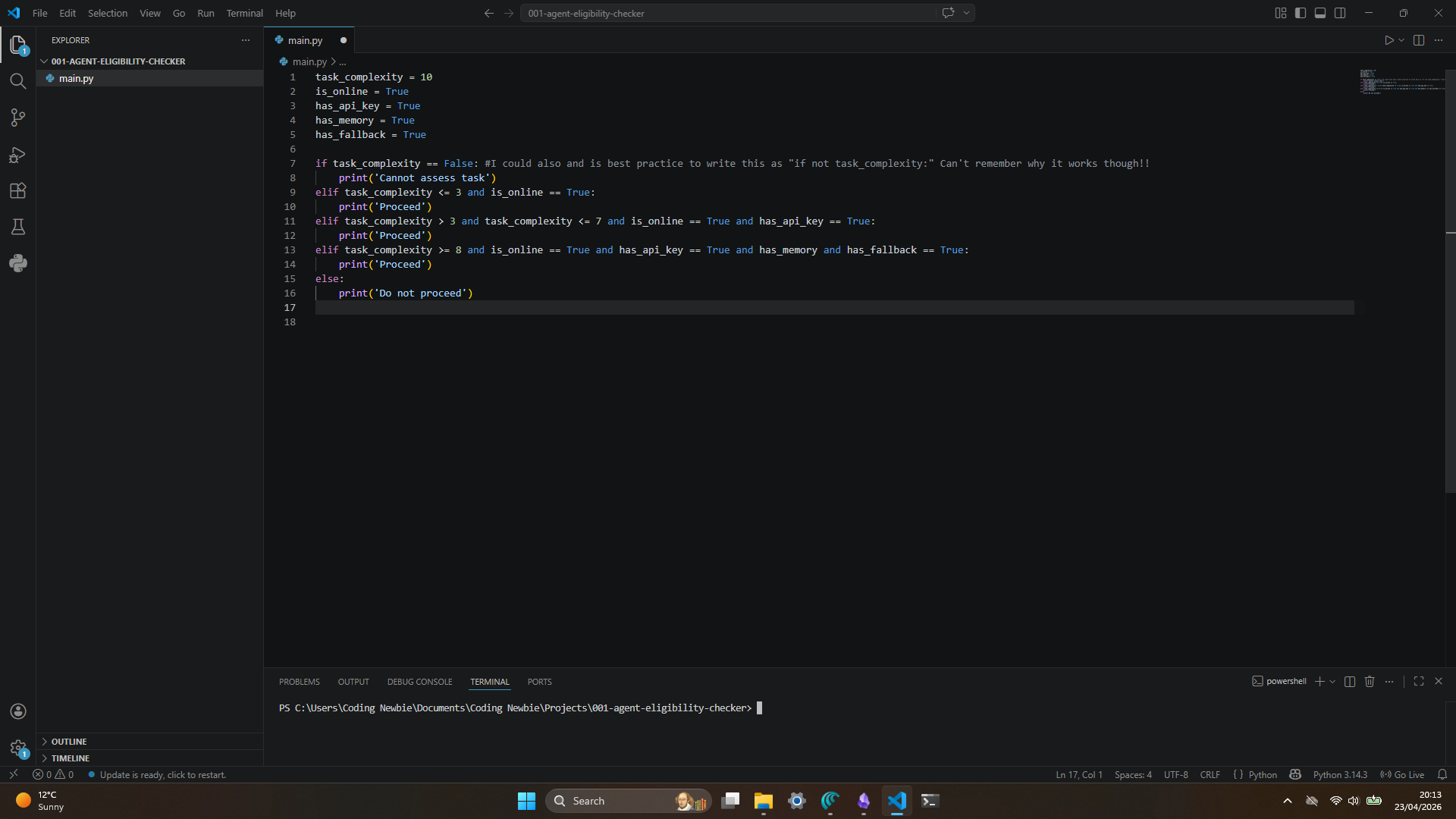

Here's the situation. My agent receives tasks from users but I need it to screen each task before attempting it. I need a simple eligibility checker built in Python that assesses whether the agent should proceed based on the following:

- `task_complexity`: a number representing how complex the task is, on a scale of 1-10
- `is_online`: whether the agent currently has an internet connection
- `has_api_key`: whether a valid API key is available
- `has_memory`: whether the agent has access to its memory/context
- `has_fallback`: whether a fallback model is available if the primary fails

The rules are:

- If `task_complexity` has no value, print `Cannot assess task`
- Complexity of 1-3: print `Proceed` only if online
- Complexity of 4-7: print `Proceed` only if online and API key available
- Complexity of 8-10: print `Proceed` only if online, API key, memory and fallback are all available
- Otherwise print `Do not proceed`
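
For reference, the rules above could be sketched roughly as follows. This is only a minimal sketch under a few assumptions that the brief does not spell out: "no value" is taken to mean `None`, any complexity outside 1-10 (or between bands, such as 3.5) falls through to `Do not proceed`, and the function name `check_eligibility` is a hypothetical choice. The function returns the message so the caller can print it.

```python
from typing import Optional


def check_eligibility(
    task_complexity: Optional[int],
    is_online: bool,
    has_api_key: bool,
    has_memory: bool,
    has_fallback: bool,
) -> str:
    """Return the screening decision for a single task (hypothetical helper)."""
    # Assumption: "has no value" means the complexity is None.
    if task_complexity is None:
        return "Cannot assess task"

    if 1 <= task_complexity <= 3:
        # Low complexity: an internet connection is enough.
        allowed = is_online
    elif 4 <= task_complexity <= 7:
        # Mid complexity: also needs a valid API key.
        allowed = is_online and has_api_key
    elif 8 <= task_complexity <= 10:
        # High complexity: needs every resource to be available.
        allowed = is_online and has_api_key and has_memory and has_fallback
    else:
        # Assumption: values outside the three bands are never attempted.
        allowed = False

    return "Proceed" if allowed else "Do not proceed"


# Example: a mid-range task with a connection and an API key.
print(check_eligibility(5, True, True, False, False))
```

Returning the string instead of printing inside the function keeps the decision easy to test; the caller can simply wrap the call in `print(...)` as shown.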

This is exactly the kind of decision logic that sits inside real AI agents. Build it, and you've built something genuinely relevant to where this whole plan is heading.

Hi,

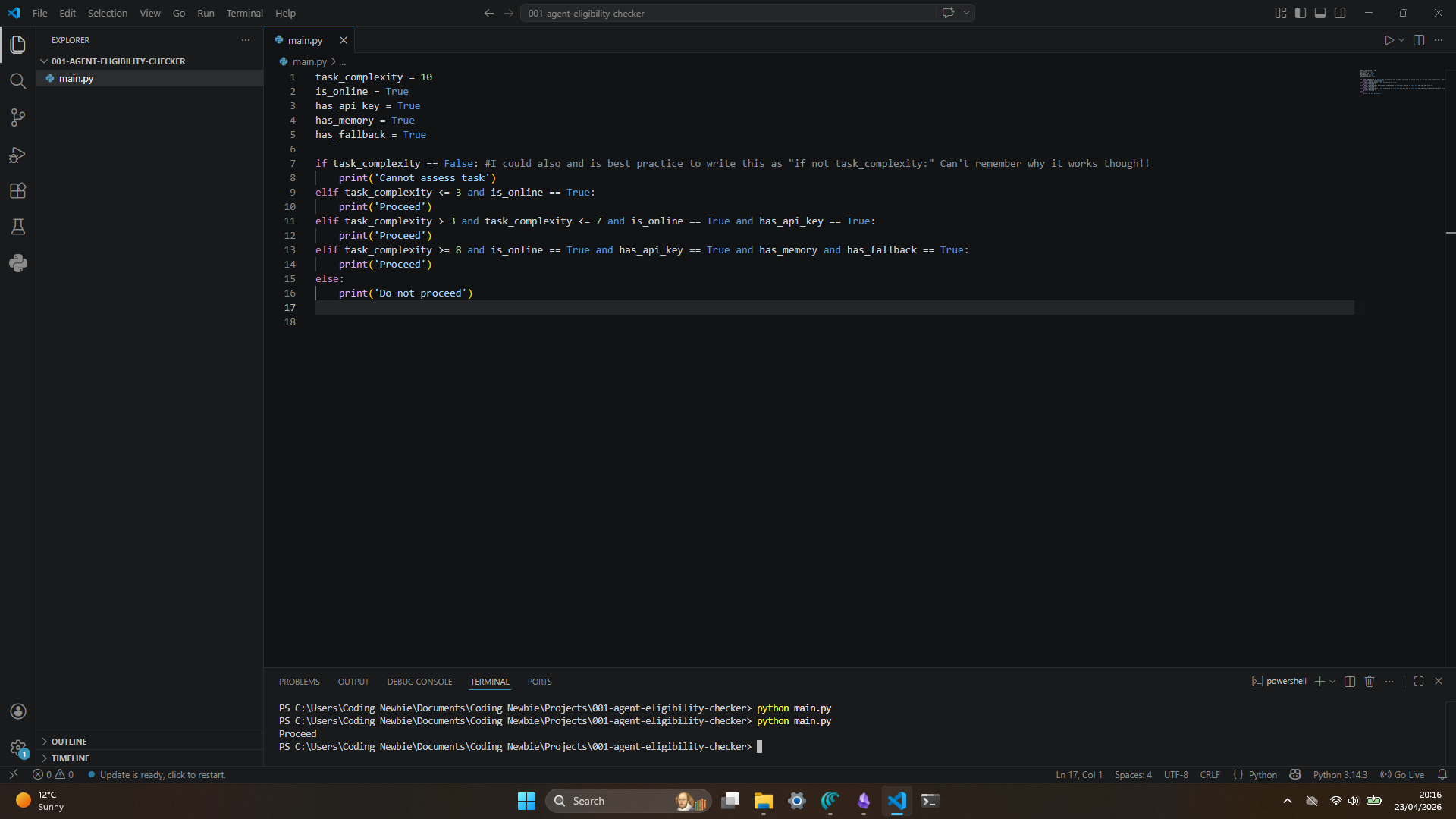

Just had a chance to run this through its paces and I'm happy to confirm it does exactly what I needed.

Tested it across all scenarios: low-complexity tasks, mid-range, and the high-complexity ones that need the full stack of resources available. It makes the right call every time. The `Do not proceed` fallback is exactly the safety net I was looking for.

Clean, readable code too — I can see what it's doing without needing it explained to me, which matters when my team need to maintain it.

Consider this signed off. Looking forward to seeing what you build next.